We are currently migrating our liquid level detection application to the newest hardware revision, but we’ve run into a critical issue with the Machine Learning stack.

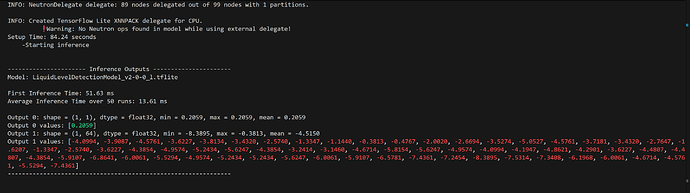

On the new Verdin iMX95 H 8GB WB IT V1.0B, inference with our TFLite model fails silently: no error is raised, but the output is wrong.

We are using the exact same Python inference script, the exact same .tflite model, and the exact same input .jpg as we did on the V1.0A hardware. The script uses tflite_runtime and successfully loads the /usr/lib/libneutron_delegate.so external delegate.

However, on the V1.0B board, the NPU returns a completely static, incorrect output (a flat probability distribution) for every single inference, regardless of the input image provided.
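For anyone who wants to reproduce this independently of our application, here is a minimal sketch of the kind of check that exposes the behaviour. It is not our production script: `MODEL_PATH` is a placeholder, the helper names (`run_once`, `is_flat`, `max_abs_diff`) are made up for illustration, and it feeds seeded random tensors instead of our .jpg so that no image decoding is involved. It assumes `numpy` and `tflite_runtime` are installed on the board.

```python
DELEGATE_PATH = "/usr/lib/libneutron_delegate.so"  # delegate path from this post
MODEL_PATH = "model.tflite"  # placeholder, not our real file name


def max_abs_diff(a, b):
    """Largest element-wise absolute difference between two equal-length sequences."""
    if len(a) != len(b):
        raise ValueError("outputs have different lengths")
    return max((abs(x - y) for x, y in zip(a, b)), default=0.0)


def is_flat(values, tol=1e-3):
    """True if all values lie within tol of each other (a flat distribution).

    The default tol assumes float probabilities; raise it for raw quantized outputs.
    """
    return (max(values) - min(values)) <= tol


def run_once(model_path, seed, delegate_path=None):
    """Run one inference on a seeded random input and return the flattened first output."""
    # Imported lazily so the pure helpers above work without these packages.
    import numpy as np
    from tflite_runtime.interpreter import Interpreter, load_delegate

    delegates = [load_delegate(delegate_path)] if delegate_path else None
    interpreter = Interpreter(model_path=model_path, experimental_delegates=delegates)
    interpreter.allocate_tensors()
    inp = interpreter.get_input_details()[0]
    out = interpreter.get_output_details()[0]

    # Build a random input that matches the model's input shape and dtype.
    shape = tuple(int(d) for d in inp["shape"])
    rng = np.random.default_rng(seed)
    if np.issubdtype(inp["dtype"], np.integer):
        info = np.iinfo(inp["dtype"])
        data = rng.integers(info.min, info.max, size=shape, endpoint=True)
    else:
        data = rng.random(shape)
    interpreter.set_tensor(inp["index"], data.astype(inp["dtype"]))
    interpreter.invoke()
    return interpreter.get_tensor(out["index"]).astype(float).ravel().tolist()


if __name__ == "__main__":
    npu_a = run_once(MODEL_PATH, seed=0, delegate_path=DELEGATE_PATH)
    npu_b = run_once(MODEL_PATH, seed=1, delegate_path=DELEGATE_PATH)
    cpu_a = run_once(MODEL_PATH, seed=0)  # same input as npu_a, CPU only
    print("NPU output flat:", is_flat(npu_a))
    print("NPU diff across two different inputs:", max_abs_diff(npu_a, npu_b))
    print("NPU vs CPU on the same input:", max_abs_diff(npu_a, cpu_a))
```

A difference of zero across two different inputs, combined with a large NPU-versus-CPU difference on the same input, would match the symptom described above and point at the delegate path rather than at the model or the image preprocessing.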

Here is the tdx-info for the failing V1.0B setup:

Software summary

- Bootloader: U-Boot
- Kernel version: 6.6.119-7.5.0-devel #1 SMP PREEMPT Mon Jan 5 09:23:13 UTC 2026
- Kernel command line: root=PARTUUID=cb6ffc87-02 ro rootwait console=tty1 console=ttyLP2,115200
- Distro name: NAME="TDX Wayland with XWayland"
- Distro version: VERSION_ID=7.5.0-devel-20251222135345-build.0
- Hostname: verdin-imx95-12594956

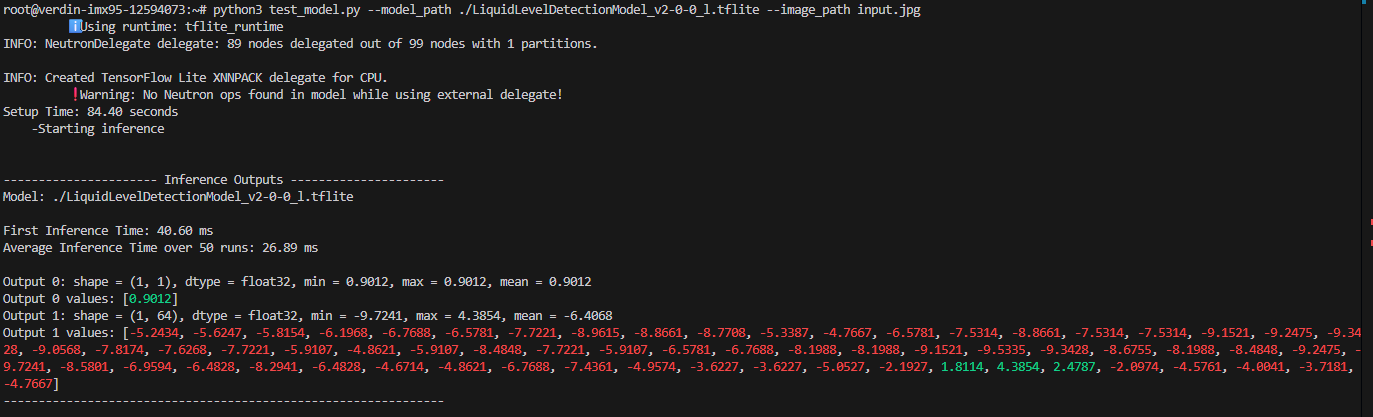

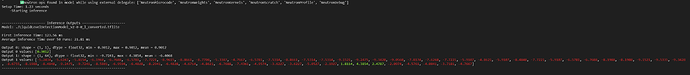

For comparison, here is the exact same pipeline running perfectly on our older V1.0A board:

Here is the tdx-info for the working V1.0A setup:

Software summary

- Bootloader: U-Boot
- Kernel version: 6.6.101-7.4.0-devel #1 SMP PREEMPT Thu Sep 25 07:49:28 UTC 2025
- Kernel command line: root=PARTUUID=3cb8eaf4-02 ro rootwait console=tty1 console=ttyLP2,115200
- Distro name: NAME="TDX Wayland with XWayland"
- Distro version: VERSION_ID=7.4.0-devel-20251222121713-build.0
- Hostname: verdin-imx95-12594073

Questions:

- Are there known regressions or architectural changes to the Neutron NPU driver/delegate between the two versions that would cause an unconverted .tflite model to fail silently?
- Could you provide a link to a known-good Reference OS Image for the V1.0B hardware that includes a working eIQ/Neutron stack? We would like to re-flash the board to completely rule out an issue with our specific Yocto build.