Hi Rudhi,

I have made progress on this issue with the help of NXP.

To confirm: the model I use in eIQ is a MobileNet_v2 classification model (input shape 224 × 224 × 3).

My environment

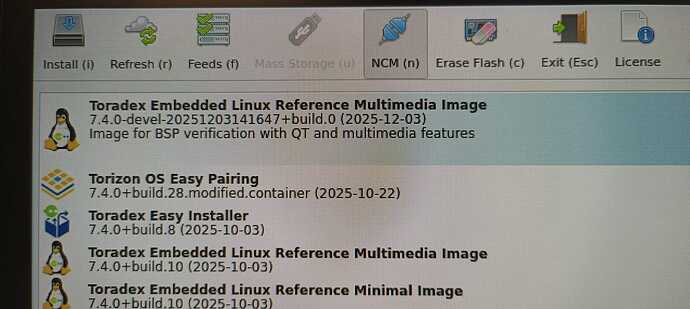

- Hardware: Verdin iMX95

- BSP: Toradex Linux

- Kernel: Linux 6.6.94-7.4.0-devel

- Neutron driver: built into the kernel

- Neutron delegate: libneutron_delegate.so

Confirmed with NXP

NXP confirmed to me:

- neutron-converter is not required for Linux 6.6

- It is only mandatory starting from Linux 6.12

- Running a standard .tflite model with the libneutron delegate should work on i.MX95

- They successfully ran inference on their i.MX95 EVK

However, if I use neutron-converter (C:\nxp\eIQ_Toolkit_v1.17.0\bin\neutron-converter\Linux_6.6.36_2.1.0), I get the same problem (error 442).
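NXP's rule about when neutron-converter is mandatory can be written as a small check. This is a minimal sketch, assuming the kernel release string starts with `MAJOR.MINOR` as it does on this board; the helper names are hypothetical, and the 6.12 threshold is simply NXP's statement as relayed above.

```python
import re


def kernel_version(release: str) -> tuple:
    """Extract (major, minor) from a release string such as '6.6.94-7.4.0-devel'."""
    match = re.match(r"(\d+)\.(\d+)", release)
    if match is None:
        raise ValueError(f"unrecognised kernel release: {release!r}")
    return int(match.group(1)), int(match.group(2))


def converter_required(release: str) -> bool:
    """Hypothetical helper: per NXP (as relayed above), neutron-converter
    is only mandatory from Linux 6.12 onwards."""
    return kernel_version(release) >= (6, 12)
```

On the board, `converter_required(platform.release())` should return False for `6.6.94-7.4.0-devel`.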

I successfully exported my model as INT8 (PTQ) from eIQ Portal and ran the Neutron converter with:

```
neutron-converter.exe --input <model>.tflite --target imx95
```

The conversion completes, but the report shows:

```
Number of operators imported = 65
Number of operators optimized = 90
Number of operators converted = 81
Number of operators NOT converted = 9
Neutron graphs = 4
Operator conversion ratio = 0.9
```

So 9 operators are not converted by Neutron.
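The reported ratio matches converted / (converted + not converted) = 81 / (81 + 9) = 81 / 90 = 0.9, and the 90 in the denominator is also the "optimized" count. The parser below is my own sketch (not an eIQ tool) and assumes the report keeps the `label = number` layout shown above:

```python
import re

REPORT = """\
Number of operators imported = 65
Number of operators optimized = 90
Number of operators converted = 81
Number of operators NOT converted = 9
Neutron graphs = 4
Operator conversion ratio = 0.9
"""


def parse_report(text: str) -> dict:
    """Map each 'label = number' line of the converter summary to a float."""
    values = {}
    for line in text.splitlines():
        match = re.match(r"\s*(.+?)\s*=\s*([\d.]+)\s*$", line)
        if match:
            values[match.group(1)] = float(match.group(2))
    return values


stats = parse_report(REPORT)
converted = stats["Number of operators converted"]
not_converted = stats["Number of operators NOT converted"]
ratio = converted / (converted + not_converted)
print(f"ratio = {ratio:.2f}, reported = {stats['Operator conversion ratio']}")
```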

What is working on my side

- The standard TFLite model runs correctly on the CPU

- The Neutron delegate loads correctly

- The TFLite tensors are correct (INT8)

- The graph is delegated successfully: NeutronDelegate delegate: 62 nodes delegated out of 65

Issue on Verdin iMX95

When inference is executed on the NPU, it fails with:

```
fail to create neutron inference job
internal fault 442
Node number XX (NeutronDelegate) failed to invoke
```

This happens:

- after delegate loading,

- after graph partitioning,

- at first inference execution.

The fallback to the CPU then works immediately.
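The AUTO-mode behaviour (try the NPU, and on error rebuild the interpreter without the delegate) can be sketched as follows. This is a minimal illustration, not the script that produced the log below: it uses the `tflite_runtime` calls that `label_image.py` relies on (`load_delegate`, `Interpreter(..., experimental_delegates=...)`, `invoke`), while the helper names and the injectable `factory` argument are hypothetical additions so the logic can be tested off-target.

```python
from typing import Any, Callable, Optional, Tuple

NEUTRON_DELEGATE = "/usr/lib/libneutron_delegate.so"


def make_interpreter(model_path: str, delegate_path: Optional[str]) -> Any:
    """Build a TFLite interpreter, optionally with an external delegate."""
    # Imported lazily so the fallback logic can be exercised without tflite_runtime.
    from tflite_runtime.interpreter import Interpreter, load_delegate

    delegates = [load_delegate(delegate_path)] if delegate_path else []
    interpreter = Interpreter(model_path=model_path, experimental_delegates=delegates)
    interpreter.allocate_tensors()
    return interpreter


def run_once(interpreter: Any, input_data: Any) -> Any:
    """Feed one input tensor, invoke, and return the first output tensor."""
    input_index = interpreter.get_input_details()[0]["index"]
    output_index = interpreter.get_output_details()[0]["index"]
    interpreter.set_tensor(input_index, input_data)
    interpreter.invoke()  # the Neutron failure surfaces here as a RuntimeError
    return interpreter.get_tensor(output_index)


def infer_auto(model_path: str, input_data: Any,
               factory: Callable[[str, Optional[str]], Any] = make_interpreter
               ) -> Tuple[str, Any]:
    """Try the NPU first; on a delegate error, rebuild on the CPU and retry."""
    try:
        npu = factory(model_path, NEUTRON_DELEGATE)
        return "NPU", run_once(npu, input_data)
    except (ValueError, RuntimeError) as exc:
        # ValueError: the delegate library failed to load; RuntimeError: invoke failed.
        print(f"NPU failed ({exc}); falling back to CPU")
    cpu = factory(model_path, None)
    return "CPU", run_once(cpu, input_data)
```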

Since NXP confirms that Neutron works on the i.MX95 EVK without neutron-converter on Linux 6.6, the problem seems specific to the Toradex BSP integration.

Could you please advise:

- Is Neutron officially validated on the Verdin iMX95 with Linux 6.6?

- Which Neutron version / firmware / BSP combination is supported?

- Are there any known issues with the Neutron runtime on the Toradex BSP?

- Is a microcode / firmware update required?

I get the same error 442 whether I use the Neutron-converted .tflite model or the unconverted one.

Error log:

```
[BACKEND] AUTO mode: NPU if available, fallback to CPU on error
[NPU] Attempting to load Neutron delegate…
[NPU] ✔ Neutron delegate loaded successfully.
INFO: NeutronDelegate delegate: 62 nodes delegated out of 65 nodes with 2 partitions.
INFO: Created TensorFlow Lite XNNPACK delegate for CPU.
[TFLITE] Active backend: NPU
[TFLITE][Tensor] input0: idx=0 shape=[ 1 224 224 3] dtype=<class 'numpy.int8'> quant_scale=0.007843137718737125 quant_zp=-1
[TFLITE][Tensor] output0: idx=172 shape=[ 1 16] dtype=<class 'numpy.int8'> quant_scale=0.00390625 quant_zp=-128
[TFLITE] Delegated ops: 0 / 68
Track 0
[TFLITE][Invoke:NPU] shape=(1, 224, 224, 3) dtype=int8
fail to create neutron inference job
Error: component='Neutron Driver', category='internal fault', code=442
[TFLITE ERROR] backend=NPU, exception=/usr/src/debug/tensorflow-lite-neutron-delegate/2.16.2/neutron_delegate.cc:261 neutronRC != ENONE (113203 != 0)Node number 65 (NeutronDelegate) failed to invoke.
→ CPU fallback: reinitializing the interpreter without the delegate.
[TFLITE] Active backend: CPU
[TFLITE][Tensor] input0: idx=0 shape=[ 1 224 224 3] dtype=<class 'numpy.int8'> quant_scale=0.007843137718737125 quant_zp=-1
[TFLITE][Tensor] output0: idx=172 shape=[ 1 16] dtype=<class 'numpy.int8'> quant_scale=0.00390625 quant_zp=-128
[TFLITE] Delegated ops: 0 / 66
[TFLITE][Invoke:CPU] shape=(1, 224, 224, 3) dtype=int8
```
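For reading the int8 output on the CPU path, the tensor details in the log give the standard TFLite affine mapping real = scale × (q − zero_point). With the output parameters shown (scale 0.00390625 = 1/256, zero point −128), a raw −128 maps to 0.0 and a raw 127 maps to 255/256. A small sketch; the raw scores are made up for illustration only:

```python
OUTPUT_SCALE = 0.00390625   # quant_scale of output0 in the log
OUTPUT_ZERO_POINT = -128    # quant_zp of output0 in the log


def dequantize(q: int, scale: float = OUTPUT_SCALE,
               zero_point: int = OUTPUT_ZERO_POINT) -> float:
    """TFLite affine dequantization: real = scale * (q - zero_point)."""
    return scale * (q - zero_point)


# Hypothetical raw int8 scores for three of the 16 classes.
raw = [-128, 0, 127]
print([dequantize(q) for q in raw])  # [0.0, 0.5, 0.99609375]
```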

Additionally:

Even the official Toradex TensorFlow Lite example using mobilenet_v1_1.0_224_quant.tflite fails on our i.MX95 board with:

- Neutron internal fault 442

- fail to create neutron inference job

The delegate loads correctly and nodes are delegated, but inference fails at runtime.

```
root@verdin-imx95-12594079:/usr/bin/tensorflow-lite-2.16.2/examples# python3 label_image.py -i grace_hopper.bmp -m mobilenet_v1_1.0_224_quant.tflite -i grace_hopper.bmp -l labels.txt -e /usr/lib/libneutron_delegate.so
```

```
Loading external delegate from /usr/lib/libneutron_delegate.so with args: {}
INFO: NeutronDelegate delegate: 29 nodes delegated out of 31 nodes with 1 partitions.
INFO: Created TensorFlow Lite XNNPACK delegate for CPU.
fail to create neutron inference job
Error: component='Neutron Driver', category='internal fault', code=442
Traceback (most recent call last):
  File "/usr/bin/tensorflow-lite-2.16.2/examples/label_image.py", line 120, in <module>
    interpreter.invoke()
  File "/usr/lib/python3.12/site-packages/tflite_runtime/interpreter.py", line 941, in invoke
    self._interpreter.Invoke()
RuntimeError: /usr/src/debug/tensorflow-lite-neutron-delegate/2.16.2/neutron_delegate.cc:261 neutronRC != ENONE (113203 != 0)Node number 31 (NeutronDelegate) failed to invoke.
```
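One arithmetic observation that may help whoever looks into this: the delegate's return code 113203 (0x1BA33) equals 442 × 256 + 51, i.e. the driver code 442 (0x1BA) shifted left by 8 bits with 0x33 in the low byte. I do not know the actual layout of Neutron return codes, so this split is an assumption, not documented behaviour:

```python
NEUTRON_RC = 113203   # neutronRC from neutron_delegate.cc:261 in both logs
DRIVER_CODE = 442     # "code=442" reported by the Neutron Driver

# Assumed layout: split off the low byte of the return code.
high, low = divmod(NEUTRON_RC, 256)
print(hex(NEUTRON_RC), high, hex(low))  # 0x1ba33 442 0x33
```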