Just fired up torizoncore-builder after about a month and got this error when trying to build a new image:

$ torizoncore-builder build

Building image as per configuration file 'tcbuild.yaml'...

=>> Handling input section

Fetching URL 'https://artifacts.toradex.com/artifactory/torizoncore-oe-prod-frankfurt/dunfell-5.x.y/release/10/apalis-imx6/torizon-upstream/torizon-core-docker/oedeploy/torizon-core-docker-apalis-imx6-Tezi_5.4.0+build.10.tar' into '/tmp/torizon-core-docker-apalis-imx6-Tezi_5.4.0+build.10.tar'

[========================================]

Download Complete!

Downloaded file name: '/tmp/torizon-core-docker-apalis-imx6-Tezi_5.4.0+build.10.tar'

No integrity check performed because checksum was not specified.

Unpacking Toradex Easy Installer image.

Copying Toradex Easy Installer image.

Unpacking TorizonCore Toradex Easy Installer image.

Importing OSTree revision aac19948c59d43bb53d21f83274d58141ccc9cab414889c08582ca2f33b76a3f from local repository...

1091 metadata, 12751 content objects imported; 412.6 MB content written

Unpacked OSTree from Toradex Easy Installer image:

Commit checksum: aac19948c59d43bb53d21f83274d58141ccc9cab414889c08582ca2f33b76a3f

TorizonCore Version: 5.4.0+build.10

=>> Handling customization section

=> Setting splash screen

splash screen merged to initramfs

=> Handling device-tree subsection

=> Selecting custom device-tree 'device-trees/dts-arm32/imx6q-apalis-tarform-BIU-v01.dts'

'imx6q-apalis-tarform-BIU-v01.dts' compiles successfully.

warning: removing currently applied device tree overlays

Device tree imx6q-apalis-tarform-BIU-v01.dtb successfully applied.

=> Setting kernel arguments

'custom-kargs_overlay.dts' compiles successfully.

Overlay custom-kargs_overlay.dtbo successfully applied.

Kernel custom arguments successfully configured with "video=HDMI-A-1:800x800R@60e".

=>> Handling output section

Applying changes from STORAGE/splash.

Applying changes from STORAGE/dt.

Applying changes from WORKDIR/releaseCandidate.

Applying changes from WORKDIR/007.

Commit 50f3e96bf5039fed178c243ad501133cebf53d9d667c6122bd0245c8125e21c3 has been generated for changes and is ready to be deployed.

Deploying commit ref: founders-dev-branch

Pulling OSTree with ref founders-dev-branch from local archive repository...

Commit checksum: 50f3e96bf5039fed178c243ad501133cebf53d9d667c6122bd0245c8125e21c3

TorizonCore Version: 5.4.0+build.10-tcbuilder.20220603143912

Default kernel arguments: quiet logo.nologo vt.global_cursor_default=0 plymouth.ignore-serial-consoles splash fbcon=map:3

1099 metadata, 12912 content objects imported; 467.8 MB content written

Pulling done.

Deploying OSTree with checksum 50f3e96bf5039fed178c243ad501133cebf53d9d667c6122bd0245c8125e21c3

Deploying done.

Copy files not under OSTree control from original deployment.

Packing rootfs...

Packing rootfs done.

Updating TorizonCore image in place.

Bundling images to directory bundle_20220603143926_850171.tmp

Removing output directory 'v2-BIU-rc2' due to build errors

An unexpected Exception occured. Please provide the following stack trace to

the Toradex TorizonCore support team:

Traceback (most recent call last):

File "/builder/torizoncore-builder", line 213, in <module>

mainargs.func(mainargs)

File "/builder/tcbuilder/cli/build.py", line 469, in do_build

build(args.config_fname, args.storage_directory,

File "/builder/tcbuilder/cli/build.py", line 455, in build

raise exc

File "/builder/tcbuilder/cli/build.py", line 444, in build

handle_output_section(

File "/builder/tcbuilder/cli/build.py", line 300, in handle_output_section

handle_bundle_output(

File "/builder/tcbuilder/cli/build.py", line 348, in handle_bundle_output

"host_workdir": common.get_host_workdir()[0],

File "/builder/tcbuilder/backend/common.py", line 456, in get_host_workdir

container_id = get_own_container_id(docker_client)

File "/builder/tcbuilder/backend/common.py", line 440, in get_own_container_id

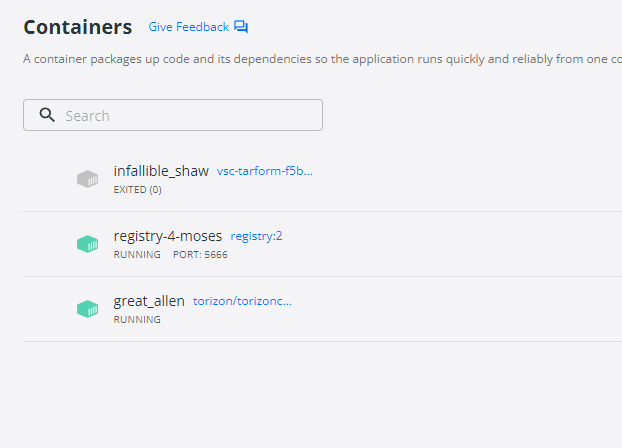

assert container_id is None, \

AssertionError: Found more than one *torizoncore-builder* container
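The traceback ends in `get_own_container_id`, which `get_host_workdir` calls during the bundle step, and which asserts that only one container matches while the builder looks up its own container ID. The sketch below is a hypothetical, simplified reconstruction of that kind of lookup, not the actual tcbuilder source: it assumes the builder matches running containers by an image name containing `torizoncore-builder`, and `ContainerInfo` and `find_own_container_id` are illustrative names. Under that assumption, it shows why a second builder container running on the same host would trip the same assertion.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class ContainerInfo:
    # Hypothetical, simplified record of one container as reported by Docker.
    container_id: str
    image: str
    status: str


def find_own_container_id(containers: Iterable[ContainerInfo],
                          image_substring: str = "torizoncore-builder") -> Optional[str]:
    """Return the ID of the single running container whose image name
    contains `image_substring`.

    Assumption: the builder identifies its own container by image name, so
    a second running builder container makes the lookup ambiguous. This
    mirrors the shape of the assertion in the traceback only.
    """
    container_id = None
    for container in containers:
        if container.status != "running":
            continue  # stopped containers are ignored in this sketch
        if image_substring not in container.image:
            continue  # unrelated containers do not count
        # A second match means we cannot tell which container is "us".
        assert container_id is None, \
            f"Found more than one *{image_substring}* container"
        container_id = container.container_id
    return container_id


# Hypothetical example values: one builder container plus an unrelated one.
single = [
    ContainerInfo("a1b2", "torizon/torizoncore-builder:3", "running"),
    ContainerInfo("c3d4", "nginx:latest", "running"),
]
print(find_own_container_id(single))  # a1b2

# Adding a second running builder container raises AssertionError.
duplicate = single + [ContainerInfo("e5f6", "torizon/torizoncore-builder:3", "running")]
try:
    find_own_container_id(duplicate)
except AssertionError as exc:
    print(exc)  # Found more than one *torizoncore-builder* container
```

If that reading is right, running `docker ps` on the host while reproducing the error would show whether an extra TorizonCore Builder container (for example one still running from an earlier session) is present.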